When America Reelected a Very, Very Sick Man

By Ken Zurski

In November of 1944, Franklin Delano Roosevelt was reelected to a fourth term as president of the United States, an unprecedented but not unexpected achievement for the New York businessman turned politician who garnered increasing support from the American people during his twelve years in the Oval Office.

Although a handful of past presidents had tried, none had served more than two terms, a limitation the nation’s first president, General George Washington, had advised others to follow. But at the time, there were no restrictions. FDR, as he was famously called, broke new ground when he won a third term. A fourth term, he felt, was just as important during a time of war.

The voting public agreed. Roosevelt, a Democrat, beat Republican challenger Thomas Dewey in what can be considered, even by today’s standards, an overwhelming victory.

The voters, however, had no idea – at least not officially – that they had elected back into office a man who was living on borrowed time.

In the months, even years, leading up to the 1944 election, the American people heard rumors and speculation about the president’s health. Roosevelt suffered from polio which limited his mobility, but in 1944 his appearance seemed to worsen. He looked feeble and weak; his eyes were often red and swollen; and his movements were slow and calculated.

Behind the scenes, there were concerns, but no immediate panic. Dr. Frank Howard Lahey, a respected surgeon known for opening a multi-specialty group practice in Boston, was brought in for a consultation. Lahey’s connection with the Navy’s consulting board led him to the White House. After a careful examination, Lahey informed Roosevelt that he was in advanced stages of cardiac failure and should not seek a fourth term. He even went so far as to warn Roosevelt that if he did win reelection, he would likely die in office. Roosevelt listened but did not follow Lahey’s advice. He felt it was his duty to continue.

In April of 1945, less than three months after being sworn in for the fourth time, Roosevelt was dead.

The president’s death took most Americans by surprise. That’s because shortly after being reelected, Roosevelt’s personal physician at the time, the surgeon general of the U.S. Navy, Dr. Ross McIntire, helped quell public fears by proclaiming FDR was feeling fine. But others could visibly see the president’s decline.

At the White House, Vice President Harry Truman was sworn in and questions were asked: Why didn’t the voting public know the truth about Roosevelt’s health?

In hindsight, Lahey’s report seemed to be the most truthful and forewarning. But information between a doctor and patient is private and confidential. The White House only asked Lahey to examine the president. Whether the details were released was up to Roosevelt and his staff. The report was concealed and only came to light nearly six decades later. Lahey himself could have spoken up, but chose to remain silent and honor doctor-patient confidentiality.

Instead, what was disclosed to the public was mostly misleading. It included a glaringly deceptive assessment of the president’s condition in the months before the election.

In March 1944, the White House announced a report by Dr. McIntire, which claimed the 62-year-old Roosevelt was looking “tired and haggard” due to the stress and strain of the war years and nothing more.

“In my opinion,” McIntire added, “Roosevelt is in excellent condition for a man of his age.”

He was either astonishingly wrong or lying.

For a Long Time, Nearly all U.S. Presidents Had Facial Hair. And Then They Didn’t

By Ken Zurski

Abraham Lincoln, the 16th President of the United States, was the first commander in chief to have facial hair.

Surprised?

Actually, by being the first to sport a beard, Lincoln started a trend that lasted nearly 50 years. But even Lincoln’s beard was an afterthought. Lincoln never had facial hair as an adult and only let his whiskers grow after receiving a letter from an 11-year-old girl named Grace Bedell, who suggested the president-elect should grow a beard. “For your face is so thin,” she wrote.

Lincoln reluctantly obliged.

After Lincoln, and in the eleven presidencies that followed, only Andrew Johnson and William McKinley chose to go clean shaven. The rest had either a beard, mustache or both. Chester Arthur was one. The 21st president had a classic version of sidewhiskers, an extreme variation of the muttonchop, or side hair connected by a mustache.

But it didn’t last.

The last president to have facial hair was William Howard Taft (mustache), who took office in 1909.

Woodrow Wilson, who was always impeccably coiffed and dressed, was next. President Wilson shaved every day and ended the trend.

Many claim the invention of Gillette’s safety razor in the early 1900s had something to do with the change. Suddenly shaving was easier and facial hair in general went out of style. The military banned beards too. This was not the case during the Civil War or the Spanish-American War, led in part by a future president, Teddy Roosevelt, who sported a bushy mustache.

Regardless of why it ended, from Wilson on, rarely a stitch of facial hair has been spotted on a president’s face. (You can add many vice-presidents to that list too.) And despite a surge in popularity for beards today, that likely won’t change with the election of the 45th president. Donald Trump has never sported facial hair and, well, Hillary Clinton, who could become the first woman president, makes the point moot.

But even something as trivial as a beard has controversy.

Some argue that John Quincy Adams, not Lincoln, should be considered the first president to have facial hair. If so, that would pull the history of presidents and hair growth back nearly four decades.

But it was not to be.

While Adams certainly had hair on his face, his chops, which extended off his ears and sloped down to his chin, were considered sideburns instead.

Before There Were Child Labor Laws, There Were Spraggers.

By Ken Zurski

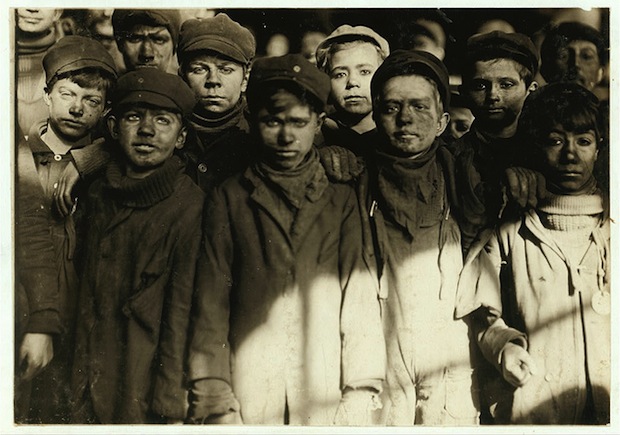

In the mid-19th century, the rail cars used in coal mines to carry large amounts of the valuable black rock between multi-leveled underground work chambers had no braking system. If one went down an incline too quickly, it simply kept going like a roller coaster until it derailed and spilled its contents in the process. Since miners were paid by the weight of cars they unloaded, this would cost them time and money. To keep this from occurring, boys as young as six years of age were hired to help the cars come to a stop.

They were called “spraggers.”

A sprag was a stick of wood, not quite as long as a baseball bat. The boys each carried several sprags and were positioned in areas where the cars, sometimes eight in a row, would roll down the slope. The boys would run alongside and jab the sprags into the spokes of the wheels. The sprags acted as brakes, slowing down the car until it stopped.

If this sounds dangerous, it certainly was. A sprag could get caught in the spokes and take an arm with it. Some boys lost fingers, or even hands. Some of the more adventurous types would jump on the side of the rolling car for fun and hold on while another jabbed the sprag in the wheel. This broke up the monotony of the day, but if the car failed to stop, the unfortunate passenger usually went with it, careening out of control until it broke from the rail and smashed into the wall. Of course, being a “spragger” meant you were one of the fastest and most agile of the young crew. Other boys would work in the picking room as “breakers,” sorting refuse from the coal; still others, called “nippers,” opened and closed heavy doors as the coal cars approached.

For many boys of this era, working in the coal mine was an honor bestowed upon them by their ancestors. After all, their fathers and grandfathers had grown up in the mines, and in all likelihood their future as a fellow miner was already set. You can imagine the mothers, even if they protested, had little say in the matter. The coal mining industry was a “well-oiled machine” that worked if all parts were in place – even at the expense of using children to keep it moving. “He never got used to the noise, the dust, the threat of danger,” writes author Susan Campbell Bartoletti in her book Growing Up In Coal Country. “He was proud to earn money for his family. That was the life of a miner’s son.”

Although no safety records were kept back then, we can assume there were deaths, perhaps many. Eventually, in the late 1800s, state laws were passed that prevented children under twelve from working in a mine. In 1902, that was raised to fourteen. But for many tight-knit mining communities there were no birth certificates, so boys younger than fourteen were passed off as simply “small for their age.”

Although the practice of using children in dangerous places was already being condemned by early trade unions and women’s groups, the movement gained more traction in 1912. That’s when the Children’s Bureau was created within the Department of Commerce and Labor and later transferred to the newly created Department of Labor.

By then, reports of children being maimed or worse were surfacing. One boy, Manus McHugh, whose job it was to oil the mining breaker machinery, reportedly wanted to finish the day so badly he attempted to oil the machine while it was still running. His arm got caught first. In the investigation that followed, McHugh’s death was blamed on disobedience. “Boys will be boys and must play,” it stated. “Unless they are held in strict discipline.” No legal action was taken.

The inaugural federal child labor law, known as the Keating-Owen Child Labor Act, was signed by President Woodrow Wilson on September 1, 1916, a Friday, and ironically the start of the long Labor Day weekend, since Labor Day always fell on the first Monday of September. But the bill only regulated child labor by banning the sale of products made by factories that employed children under fourteen. It was ruled unconstitutional in 1918.

The first minimum age requirement of a minor, part of the Fair Labor Standards Act, was not federally mandated until 1938.

Father Marquette Mentioned a Parrot Among Other Strange Birds in Illinois

By Ken Zurski

In 1674, while exploring the Illinois River for the first time, French Jesuit missionary Father Jacques Marquette wrote in his journal: “We have seen nothing like this river that we enter, as regards its fertility of soil, its prairies and woods; its cattle, elk, deer, wildcats, bustards, swans, ducks, parroquets, and even beaver.”

Certainly the reference to parroquets, or perroquets (French for parrot), raises some eyebrows. But a species called the Carolina Parrot, now extinct, did inhabit portions of North America, as far north as the Great Lakes, as early as the 16th century.

More puzzling, however, is the mention of the bustard.

Even the Illinois State Museum in the state’s capital of Springfield questions this unusual reference.

“What is a Bustard?” the Museum sign asks in an exhibit showcasing birds native to Illinois, then answers: “We’re not sure.”

Of course, the bustard is a real bird. In Europe and Central Asia it is more commonly known. In North America? It just doesn’t exist. But did it at one time? According to the Museum’s notes, several French explorers described bustards as being common game birds of Illinois and said they resembled “large ducks.”

Large indeed.

A Great Bustard can stand 2 to 3 feet in height and weigh up to 30 pounds, making it one of the heaviest living animals able to fly. Its one distinctive feature, besides its size, is the gray whiskers that sprout from its beak in the winter.

Father Marquette was more a man of the cloth than a scientist. His mission was to preach to the Illinois Indians or “savages” as he calls them. Along the way, however, he described the scenery and game in detail. The “bustard” comes up quite often in his journal. He even refers to hunting them, possibly eating them too. “Bustards and ducks pass continually,” he wrote.

Perhaps, as some suggest, Marquette was describing a common wild turkey. His recollections seem to imply they were airborne, which wild turkeys can do, despite the myth that they cannot fly (the “fattened” farm turkey – the one we use for Thanksgiving – does not fly).

The Illinois State Museum goes even further by speculating that the bird Marquette was referencing was not a bustard at all, but the Canada Goose, which is similar in size and appearance to the Great Bustard.

But, as the Museum concedes, even that is “open to question.”

The Ten-Million Dollar Question

By Ken Zurski

For a man whose mission it was to relinquish his entire fortune before his death, Andrew Carnegie still had plenty of money left when he passed in 1919 at the age of 83. That’s no indictment of a man who built a massively successful business, became the richest man in America, and devoted his later years to giving it all back. It was a noble thing to do. But Carnegie had made so much capital that even he found it difficult to allocate the funds sufficiently.

So he asked for help.

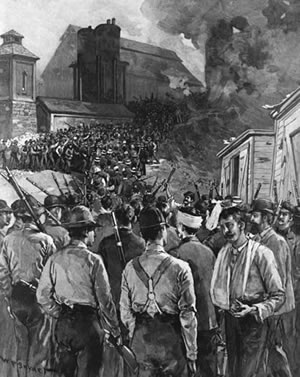

Carnegie grew up poor in Scotland, came to America, and amassed millions in the steel industry. Along the way, he made just as many enemies as dollars. Like many so-called tycoons of his time, Carnegie was accused of cutthroat practices which sacrificed workers’ rights for the bottom line. The Homestead Strike of 1892 grew out of a dispute between steel workers at Carnegie’s Homestead, Pennsylvania, plant and management, which refused to raise workers’ pay despite a windfall in profits. The riot that followed is still one of the bloodiest labor confrontations in history. Ten men were killed in the melee, and Carnegie, who continued production with nonunion workers, was blamed for the uprising.

Carnegie viewed it differently than the workers. He believed that reducing production costs meant lower prices to consumers. Therefore, he theorized, the community as a whole profited, not the unions. It was a slippery slope. But, many asked, was it worth men dying for?

Carnegie, of course, thought of himself as a benefactor and did not apologize for becoming a wealthy man. When he retired, however, he made it clear that being rich was only relative: “Man must have no idol and the amassing of wealth is one of the worst species of idolatry! No idol is more debasing than the worship of money! Whatever I engage in I must push inordinately; therefore should I be careful to choose that life which will be the most elevating in its character.”

Carnegie didn’t hand out money haphazardly. He spent it on things and places that moved him. Among other worthy causes, the most prominent were funds for more schools – especially in low income communities – and the building or expansion of public libraries. In each case, he released the money only after specific demands were met, each one designed to make sure none of it went to waste. Carnegie had final approval.

In 1908, at the age of 72, with millions more left to give, Carnegie wrote a letter to people he admired. It was in effect an offer disguised as a question: “If you had say five or ten million dollars (close to 5-billion today) to put to the best possible use,” Carnegie asked, “what would you do with it?” Many of the correspondents were business leaders and some were presidents of institutions already bearing the Carnegie name. Most responded in kind that the money should be used to continue fellowships. The letters were an indication that the burden of giving away a fortune was weighing heavily on Carnegie’s mind.

“The fact is that after spending about $50-million on libraries, the great cities are generally supplied and I am groping for the next field to cultivate,” Carnegie wrote President Theodore Roosevelt. “You have a hard task at present but the distribution of money judiciously is not without its difficulties also and involves harder work than ever acquisition of wealth did.”

Carnegie wrapped up the letter by pointing out the absurdity of that last line. “I could play with that and laugh,” he noted.

In the end, of course, Carnegie left enough money behind to take care of his wife and daughter. His loyal servants and caretakers were awarded pensions and his closest friends received substantial annuities.

Carnegie gave away an estimated $350 million, but for the rest, he had one final request. After the segments of the will were divided up, nearly $20-million remained in stocks and bonds.

He bequeathed that amount to the Carnegie Corporation, the organization he proudly founded and which still exists today.

(Sources: Andrew Carnegie by David Nasaw; various internet sites)

UNREMEMBERED FRUIT: The Rise and Fall of the ‘Woodpecker Apple’

By Ken Zurski

Meet Loammi Baldwin.

He was a colonel in the Revolutionary War.

He commanded several regiments during the battles of Concord and Lexington and accompanied General George Washington when the future president famously crossed the Delaware River to surprise the Hessians in Trenton, New Jersey.

But that’s not all. Baldwin was also a member of the Academy of Arts and Sciences who, like Benjamin Franklin, conducted experiments in electricity. He was elected to the Massachusetts General Assembly and, as an engineer, was instrumental in pioneering a waterway that connected Boston Harbor to the Merrimac River, known as the Middlesex Canal.

Yes, Col. Baldwin is certainly a man who held many distinguished titles and honors. For some, he is considered to be the “Father of Civil Engineering.” But today he is best remembered – or unremembered, if you will – for one thing: an apple.

Let’s backtrack.

While building the Middlesex Canal, Baldwin visited the farm of a man named William Butters. He went on the recommendation of a friend, who said Butters had grown the sweetest apple in all of New England. Butters told Baldwin that the tree was frequented by woodpeckers, which in addition to the apples would eat tree grubs and other damaging insects. Butters called the apple a “Woodpecker” after the bird, or ‘Pecker for short. Others had dubbed it “Butters Apple.”

Baldwin was so impressed that he planted a row of ‘Pecker trees near his plantation home in Woburn, Massachusetts. “The tree was a seedling,” a historian wrote of Baldwin’s interest, “but the apple had so fine a flavor that he returned at another season to cut some scions, and these being grafted into his own trees, produced an abundant crop.”

After Baldwin’s death in 1807, the ‘Pecker Apple was officially named in his honor and the Baldwin Apple quickly became the most popular fruit in New England. It’s easy to see why. The Baldwin was smaller than most red apples are today, but its skin was mostly free of blights. Farmers loved the Baldwin because they could harvest large crops and transport them readily with little or no deterioration. Baldwins were also a good apple to make into a rich, sweet cider. The hard texture was perfect for making pies. “What the Concord is to the grapes, what the Bartlett has been among pears, the Baldwin is among apples,” the New England Farmer wrote in 1885.

Unfortunately, the Baldwin’s dominance wouldn’t last. Too many severe winters took their toll.

In fact, in one particularly harsh year, 1934, nearly two-thirds of all apple trees in the northeast were destroyed. The next year the state of Maine helped growers replenish their decimated orchards, but only McIntosh and Red Delicious seeds were offered. The Baldwins were just too delicate to replant in large numbers. Still, some farmers grew small crops to maintain the rich cider.

So Baldwins survive today in small numbers.

But there’s one more interesting note about Loammi Baldwin. Besides the name, the “Father of Civil Engineering” has another connection to apple folklore. Baldwin, it turns out, was the second cousin of Johnny Chapman, another Massachusetts man and traveling missionary whose work included the planting of apple trees throughout the expanding frontier.

We know him today not as Johnny Chapman, but Johnny Appleseed.

The Two-Week, Two-Weight Olympic Boxer

By Ken Zurski

Oliver Leonard Kirk has two gold medals from one Olympic Games. That’s not unusual in today’s Olympic climate. Many athletes have done it, especially in swimming and track and field events.

But Kirk was a boxer.

In the slipshod third Olympiad held at the St. Louis World’s Fair in 1904, due to a limited number of competitors, especially in boxing, Kirk was allowed to compete in two weight classes.

So as a featherweight Kirk faced a slightly larger opponent in Frank Bee Haller, another American. Kirk was a brawler and won. But was it a fair fight? Haller was a tough competitor, but many felt he was taxed from an earlier bout, while Kirk had the advantage of a bye in the first round.

Regardless, Kirk took the gold.

Kirk then spent a week losing 10 pounds and as a bantamweight faced the slightly smaller George Finnegan. A week before, Finnegan had beaten Miles Burke to win the flyweight gold medal.

Finnegan quickly added 10 pounds to battle Kirk.

Finnegan may have been pressured to move up and fight Kirk. That’s because there were no other competitors in the bantamweight division. Kirk had made the weight, but had no one to fight. So Finnegan put the extra load on his frame.

It showed.

Kirk landed more punches and won his second gold medal.

The Unlikely and Unconventional Winner of the 1960 Olympic Marathon

By Ken Zurski

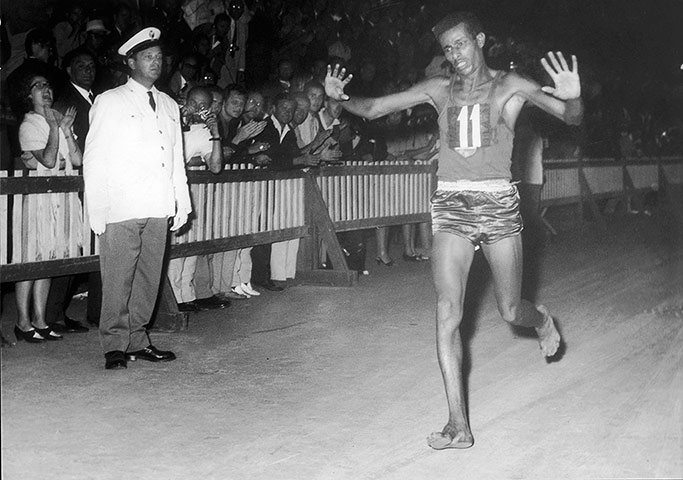

At the 1960 Summer Olympic Games in Rome, Gordon McKenzie was one of three U.S. runners entered in the prestigious marathon, a race the Americans were given only an outside chance to win.

Before the start of the race, however, McKenzie noticed a “skinny little African guy” in the field. “There’s one guy we don’t have to worry about,” he said to another entrant. The guy he was referring to was Abebe Bikila from Ethiopia.

McKenzie knew that in the past African runners didn’t fare so well in long distance races. But Africa, the continent, was changing. New nations were forming and more athletes were competing like Bikila, who was a soccer player and soldier before becoming a runner. Bikila was also used to training in the extreme heat, something many of the other runners were not.

On the day of the race, September 10, temperatures were expected to be near 90 degrees. So a change was made to start the race at twilight and end in “torch-lit” darkness by the Arch of Constantine and not in the Olympic stadium, a first for the games.

By the time it was over, Bikila had stunned the crowd and won the race convincingly – shattering an Olympic record in the process. A fitting end to the Games which had already introduced a track star named Wilma Rudolph and an unknown young boxer at the time named Cassius Clay.

Bikila became the first runner from Africa to win an Olympic marathon and in hindsight set the stage for the dominance of African marathoners to come.

But it’s how he won that most impressed.

Bikila had to throw out the badly frayed sneakers he arrived with and dismissed a last minute replacement pair because it didn’t fit properly.

He had nothing left to wear.

So like he had done many times in training, Bikila started and completed the race in his bare feet.

That’s Not George Washington on the First Dollar Bill

By Ken Zurski

In the summer of 1861, after the Battle of Bull Run disproved the theory that the Civil War would end quickly, U.S. Treasury Secretary at the time Salmon P. Chase turned to the option of paper money to help pay the Union soldiers. This included the first government-issued dollar bill.

A bill which looked much different than it does today.

The man on the front of the original dollar bill was Chase himself who did the honors of appointing his own likeness to the first “greenbacks” (named for the green ink used on the back, with black ink in front).

Chase was a political rival of Lincoln who became part of his cabinet, oftentimes disagreeing with the president and threatening to quit on numerous occasions until Lincoln defused the matter – usually with a joke.

Gold and silver coins were popular, but at the onset of the Civil War, to help fund it, Congress authorized the issue of demand notes worth $5, $10 and $20. The notes could be redeemed for coin. The $1 bill soon followed.

Chase contributed to the design of the new dollar bill and, having presidential aspirations himself, thought his image on its face would help the cause. The fact that he ran the Treasury Department was a strong argument for inclusion.

Eventually, Chase was replaced by George Washington on the dollar bill.

But in 1928, more than 50 years after his death, Chase was honored again with his picture on the newly minted $10,000 bill. The big bills, like the $1,000 (Cleveland), $5,000 (Madison), and $10,000, were used mainly for transfers between banks. Even a $100,000 bill (Wilson), the largest single denomination ever, was printed in 1934 for this same purpose.

Although it went out of circulation, the $10,000 bill is still considered legal tender and banks would be glad to exchange it if collectors were crazy enough to pass on the market price which is ten times or more its face value.

The original $1 bill, with Chase’s likeness, while not as rare, is still collectible. Mint condition bills can fetch up to $1,000. Most are worth between $100 and $300.

Chase is also remembered to this day by a large bank, now a merged institution, with his name still in its title.

When the ‘Stache Ruled the Pool

By Ken Zurski

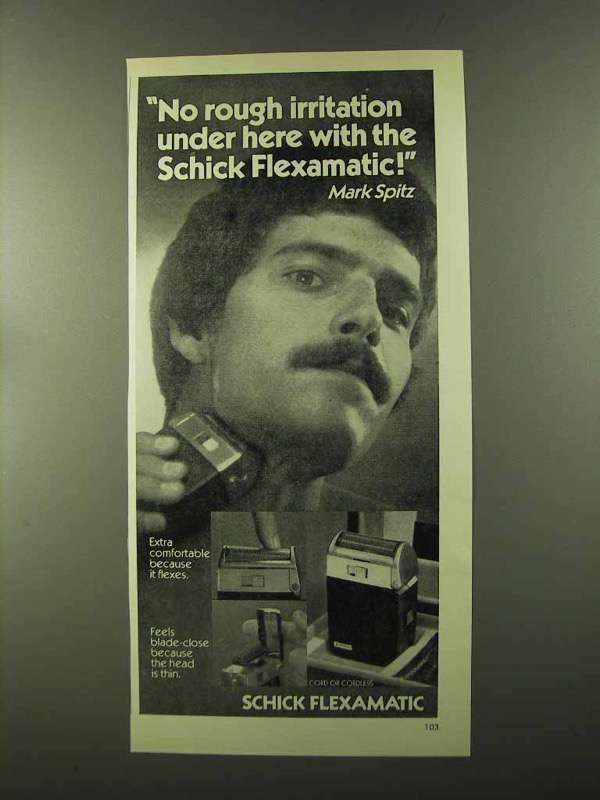

In August and September of 1972, at the Summer Olympics in Munich, West Germany, American swimmer Mark Spitz did what no other Olympian had ever done up to that point: he won more gold medals at a single Games than anyone before him.

In this case, it was a “golden” seven.

It could have been only six.

Spitz was satisfied with his unprecedented six-for-six gold medal streak and considered bowing out of his last scheduled race, the 100m freestyle, after being edged by rival and current world record holder in the event Michael Wenden of Australia in both the prelims and the semis.

Spitz thought a loss would tarnish his previous accomplishments. But his coach convinced him to race, arguing that since the 100m freestyle was the premier swimming event of the games, skipping it would leave Wenden to be crowned the fastest swimmer in the world.

Spitz raced, won, and beat Wenden’s world record by nearly a second.

“There is no money in swimming like there are in other sports,” Spitz said about his record making accomplishments. “The medals don’t have much monetary value. I’ll hang them on the wall someplace.”

But Spitz’s fortunes would change.

After the games, Spitz became an instant celebrity and one of the first Olympians to profit off his success by picking up major product endorsements from swim trunk maker Speedo and razor king, Schick.

The latter thanks to that famous mustache, Spitz’s trademark.

Spitz grew up in Honolulu, Hawaii, and became a competitive swimmer at an early age. He sported the mustache in college on a bet from a coach that he couldn’t grow one.

After the games, which were marred internationally by the Israeli hostage tragedy, the poster of Spitz sporting his mustache and seven gold medals around his neck became a best seller.

The ‘stache, however, was a source of curiosity and contention for other competitors.

Even the coach of the Russian team went so far as to ask Spitz if he thought his facial hair slowed him down. Spitz told him it actually streamlined water around his mouth, making him swim faster. Today’s competitive swimmers would disagree, since they prefer no facial or body hair in general except perhaps on their heads, which are usually covered by smooth swim caps. In Spitz’s era, swimmers didn’t wear caps on their heads.

Spitz’s record for gold medals won at a single games was finally broken by Michael Phelps at the Beijing Games in 2008.

But Phelps won his 8 gold medals without breaking a world record in one event, which leaves Spitz the lasting distinction of besting the world record in every event he entered, even the seventh and final race of his swimming career, the 100m freestyle, the event he considered skipping.

“You can bet your ‘omph-pah’ horn or your last stein of Munich beer, or both, that it will be seven gold medals before the sun sets,” wrote UPI sports writer John G. Griffin on the day of that last race.

He was right.